Instead of "Alexa, please start the weather report", techies want the machine to also respond to "Alexa, do I need an umbrella?"

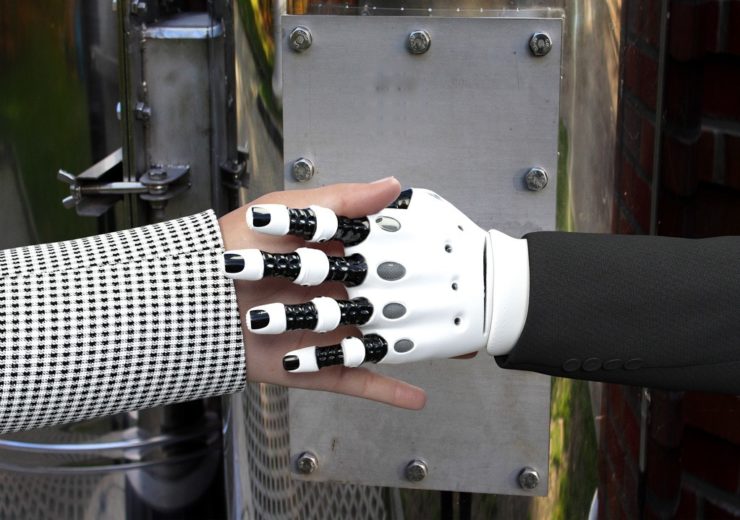

Human-machine interaction requires more empathy and understanding of context from AI bots (Credit: PxHere)

As artificial intelligence becomes an increasingly pervasive part of our lives, German creative and consulting agency Triplesense Reply’s partner Julia Saswito and manager Dan Fitzpatrick look at where we are today and how we can use innovative technology to inject more humanity into human-machine interaction.

This year at the World Economic Forum meeting in Davos, Alphabet CEO Sundar Pichai said that artificial intelligence will be “more profound for humanity than fire and electricity.”

We’re not there yet but AI will be making a big impact on large parts of our society in the coming years. It will also influence our communications and interactive behaviours.

In the B2B sector especially, we already harness the power of AI and robots through chatbots and voice assistants.

But despite the benefits that human–machine interaction can bring, human interaction is far more complicated than AI can deal with.

Machines currently struggle to understand vocal expression, empathy and emotions, which limits their usefulness to companies.

After all, a voice assistant that does not respond to a user’s dialect is as useless as a tool used by the social services that relies on facts and figures to determine whether someone is eligible for aid, but ignores considerations such as illness.

More empathy needed in human-machine interaction

Virtual assistants already hold a place in our lives. They are our message readers, weather forecasters or weekend planners.

In order for these to be perceived less as machines and more as assistants, we urgently need to inject more emotion and empathy into the human-machine relationship.

After all, the more human a machine appears, the more humans will use it.

In all industries, it is important to strike a sympathetic chord with the users of a voice interface.

Other factual criteria, such as product information or prices, are often of secondary importance.

When virtual assistants act empathetically, the level of user acceptance rises.

Providers of artificial intelligence solutions must therefore ensure that their interfaces generate an appealing resonance in the customer’s mind – a principle that applies to almost all AI-controlled communication services, not only in more intimate interactions, such as nursing care for the elderly.

Natural language processing crucial to human-machine interaction

For several years, voice user interface (VUI) designers have been trying to adapt voice interactions to human behaviour.

They aim to create a non-mechanical interaction of the same quality as human-to-human communication.

To achieve this, designers and programmers are trying to develop technical skills and create voice commands that are flexible.

Special attention is given to the pronunciation of foreign words and acronyms.

For example, when Alexa pronounces something like “limoncello” correctly, the user experience is perceived as human.

In technical terms, there is a mark-up language in Alexa’s response controls that should help with this.

This language, speech synthesis mark-up language (SSML), was designed to enhance the monotone expression of the machine with human intonation and modulation: quieter or louder or interjected with “dramatic pauses”.

Programmers use SSML to flag selected terms as Italian, for example, so that these are automatically pronounced with appropriate intonation for the language in use.
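As a rough illustration of the idea, the sketch below assembles an SSML response in Python. The `lang`, `break` and `prosody` elements are standard SSML; the helper names, the sentence itself and the choice of the `it-IT` locale are assumptions made for this example, not taken from any particular Alexa skill.

```python
from xml.sax.saxutils import escape


def say_in_language(text: str, locale: str) -> str:
    """Wrap a term in an SSML <lang> tag so it is read with that locale's pronunciation."""
    return f'<lang xml:lang="{locale}">{escape(text)}</lang>'


def build_speech() -> str:
    """Return one SSML document; the wording is purely illustrative."""
    term = say_in_language("limoncello", "it-IT")  # flag the term as Italian
    return (
        "<speak>"
        f"For dessert, try a glass of {term}."
        '<break time="1s"/>'  # a one-second "dramatic pause"
        '<prosody volume="soft">Served ice cold, of course.</prosody>'  # spoken more quietly
        "</speak>"
    )


ssml = build_speech()
print(ssml)
```

The string returned by `build_speech()` is what a voice service would hand to the speech synthesiser in place of plain text.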

The wording a voice assistant accepts also has to match the way people actually speak.

When communicating with voice assistants, the user should not be restricted to using only certain commands.

Instead, the user should be able to speak completely freely and naturally.

So instead of “Alexa, please start the weather report”, Alexa should also respond to “Alexa, will it rain today?” or “Alexa, do I need an umbrella?”
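One simple way to picture this is a single "intent" that several different phrasings all lead to. The Python sketch below uses exact matching against a short list of sample utterances purely as a stand-in; production voice platforms generalise from such samples with trained language models. The intent name `GetWeatherIntent` and both function names are hypothetical.

```python
import re
from typing import Optional

# Hypothetical intent with the phrasings from the article as sample utterances.
SAMPLE_UTTERANCES = {
    "GetWeatherIntent": [
        "start the weather report",
        "will it rain today",
        "do i need an umbrella",
    ],
}


def normalise(utterance: str) -> str:
    """Lower-case the utterance and strip the wake word, 'please' and punctuation."""
    text = utterance.lower()
    text = re.sub(r"^alexa[,\s]+", "", text)  # drop the wake word
    text = re.sub(r"\bplease\b", "", text)    # politeness should not change the intent
    text = re.sub(r"[^a-z\s]", "", text)      # remove punctuation
    return " ".join(text.split())             # collapse leftover whitespace


def resolve_intent(utterance: str) -> Optional[str]:
    """Return the matching intent name, or None if no sample utterance matches."""
    cleaned = normalise(utterance)
    for intent, samples in SAMPLE_UTTERANCES.items():
        if cleaned in samples:
            return intent
    return None


print(resolve_intent("Alexa, do I need an umbrella?"))
```

All three phrasings from the example resolve to the same intent, so the assistant can answer each of them with the same weather lookup.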

Taking vocal distinctions into account in human-machine interaction

Even the most minimal of nuances, such as sarcasm, dialect, slang, regional customs or irony, have a significant influence on the meaning of a statement and how satisfactory communication with a voice assistant is.

Just the intonation in the sentence “very funny, Alexa!” determines whether the joke really matched the user’s sense of humour.

Furthermore, an English person may be irritated to be directed to a recipe for jelly when they were searching for one for jam.

“Sentiment analysts” are the experts in charge of interpreting the tone and distinctions of human language, in order to either respond to the conversation or ask for clarification in the event of uncertainty.

Brand communication via machine

Addressing people at an emotional level is particularly useful when price and data also play a role in decision making.

Product managers and VUI designers should therefore ensure that via this interface, a brand also conjures up an emotive image in the customer’s mind.

The customer is not always convinced about a product through another touchpoint before they order it through Alexa.

The brand and product name, as well as a brief description, should therefore be adapted, not just for the algorithm but also for the consumer.

After all, what do customers prefer to buy? A “mouth-watering combination of crunchy almonds and delicious dried grapes all engulfed in Cadbury Dairy Milk milk chocolate” or a “bar of Cadbury Fruit and Nut”?

Machines must recognise context in human interaction

In many cases, the benefits of AI already outweigh those of human-to-human communication, especially in the service industry.

Thanks to AI, gone are the days of languishing in long call queues, only to get the wrong person on the other end.

Machines recognise context and can quickly direct a query to the right employee.

They also automatically compare the current issue with previous problems and offer direct solutions, virtually in real time.

Both the customer and the service employees in the company benefit from this.

It is also conceivable that AI in the form of bots may eventually take over multi-channel communications in customer service almost seamlessly, to bring a customer discussion forward, for example.

A service question that is interrupted can be continued from that point the next time contact is made, regardless of where the question was first asked.

The bot starts the conversation not with “How can I help you?” but with “Unfortunately our conversation yesterday on the phone was cut short. Are you still having a problem with your washing machine?”
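A minimal sketch of how such a greeting could be produced, assuming the bot keeps one record of unresolved issues per customer that is shared across all channels. The class and field names here are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class OpenIssue:
    day: str      # when the earlier conversation took place, e.g. "yesterday"
    channel: str  # where it took place, e.g. "on the phone"
    topic: str    # what it was about, e.g. "washing machine"


class ConversationStore:
    """Holds unresolved issues per customer, independent of the contact channel."""

    def __init__(self) -> None:
        self._open: Dict[str, OpenIssue] = {}

    def interrupt(self, customer_id: str, issue: OpenIssue) -> None:
        """Remember a conversation that ended before the issue was solved."""
        self._open[customer_id] = issue

    def resolve(self, customer_id: str) -> None:
        """Forget the issue once it has been solved."""
        self._open.pop(customer_id, None)

    def greeting(self, customer_id: str) -> str:
        """Pick up an interrupted conversation if one exists, else greet generically."""
        issue = self._open.get(customer_id)
        if issue is None:
            return "How can I help you?"
        return (
            f"Unfortunately our conversation {issue.day} {issue.channel} was cut short. "
            f"Are you still having a problem with your {issue.topic}?"
        )


store = ConversationStore()
store.interrupt("customer-42", OpenIssue("yesterday", "on the phone", "washing machine"))
print(store.greeting("customer-42"))
```

Because the store is keyed by customer rather than by channel, the same greeting is available whether the customer returns by phone, chat or smart speaker.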

Of course, using AI is not practical in every service area. It always depends on the industry, scenario and context.

What is considered an immeasurable benefit for a restaurant chain offering table reservations is not necessarily as beneficial for an industrial production systems provider fielding a variety of queries.

Beyond a certain level of information and interaction, a smartphone or Echo Dot becomes a bottleneck.

This is the point at which the information and interaction become too complex for AI and require human involvement.

This threshold, known as the “level of confidence”, can be raised continuously with machine learning.

The machine evaluates all interactions between users and the chatbot to constantly improve the interface’s performance, increase the complexity of AI-controlled tasks and ultimately ease the workload on humans.

For now, there will still be communication cases where even the best VUI fails.

At that point, the conversation should be handed over to a human who can either end the conversation satisfactorily or document the failure. AI can then work on enhancing the knowledge base for future conversations.
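The handover can be sketched as follows. This assumes, for illustration only, that the bot attaches a confidence score between 0 and 1 to each answer and that anything below a minimum score goes to a human; the `Router` class and the 0.7 cut-off are hypothetical, not a description of any specific product.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Router:
    """Decides whether the bot or a human should handle a query."""

    min_confidence: float = 0.7  # assumed cut-off; a real system would tune this
    failures: List[str] = field(default_factory=list)

    def route(self, query: str, confidence: float) -> str:
        """Return "bot" if the bot is confident enough, otherwise "human"."""
        if confidence >= self.min_confidence:
            return "bot"
        # Document the failure so the knowledge base can be improved later.
        self.failures.append(query)
        return "human"


router = Router()
print(router.route("Book a table for two at 8pm", confidence=0.93))
print(router.route("Line 3 stops after the firmware update", confidence=0.41))
print(router.failures)
```

The logged failures are the raw material for the learning step: as they are worked into the knowledge base, more queries clear the cut-off and fewer need a human.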

The number of use cases, industries and situations in which voice assistants may prove practical is exploding.

Ultimately, the most successful AI will take the emotional aspects of human communication into account, respond to natural human conversation and allow for seamless interaction across multiple touchpoints.